A Multi Cluster and Multi Orchestrator home lab

Introduction

In this post, I want to summarise the new home lab I built recently. My goal was to have two small, isolated clusters, each running a different workload orchestrator: k3s on one and HashiCorp Nomad on the other. Going through Alex Ellis’s netbooting workshop taught me a lot about how to install and configure everything correctly.

Bill of Materials

- Intel NUC kit NUC10i3FNK + Samsung 970 EVO PLUS M.2 500GB

- 6x Raspberry Pi 4 Model B (4GB)

- 3x Cluster Case for Raspberry Pi

- 6x Pimoroni Fan SHIM

- 6x SanDisk SD cards

- 6x SanDisk Ultra Fit 64GB USB drive

- 2x Kensington UA000E USB 3.0 to Gigabit Ethernet adapter

- 2x Netgear GS105

- 1x Anker PowerPort 10

- Short UTP cables

- Short USB-C cables

A note on the power supply (Anker PowerPort 10): compact multi-port USB chargers like this one look nice and tidy, but I’ve been warned that problems can occur when they cannot supply enough power. I’m using the Anker charger anyway, and I’m actively monitoring the Raspberry Pis for under-voltage events.
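One way to watch for under-voltage is to decode the bit field that the Raspberry Pi firmware reports through `vcgencmd get_throttled`. The sketch below is not necessarily how I monitor my own Pis; it assumes `vcgencmd` is available on the node (on Ubuntu it may have to be installed separately) and only shows how a reported value maps to readable flags. The sample value is made up for illustration.

```python
from typing import List

# Flag bits reported by `vcgencmd get_throttled` on Raspberry Pi firmware.
THROTTLE_FLAGS = {
    0: "under-voltage detected",
    1: "ARM frequency capped",
    2: "currently throttled",
    3: "soft temperature limit active",
    16: "under-voltage has occurred",
    17: "ARM frequency capping has occurred",
    18: "throttling has occurred",
    19: "soft temperature limit has occurred",
}


def decode_throttled(line: str) -> List[str]:
    """Decode a line such as 'throttled=0x50005' into readable flag names."""
    value = int(line.strip().split("=", 1)[1], 16)
    return [name for bit, name in sorted(THROTTLE_FLAGS.items()) if value & (1 << bit)]


if __name__ == "__main__":
    # Sample value for illustration only, not a reading from a real node.
    for flag in decode_throttled("throttled=0x50005"):
        print(flag)
```

Bits 0 to 3 describe the current state, while bits 16 to 19 record that the condition has occurred at some point since boot, so a value of `0x0` means the power supply has kept up so far.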

The Cluster Cases include fans, but I preferred the Pimoroni Fan SHIM because it can be controlled from software.

Software

Intel NUC

The Intel NUC, running Ubuntu 20.04, is the central component and hosts several supporting services. For example, using dnsmasq, it acts as a DHCP server that issues IP addresses to the devices on the two private networks when they boot up. It will also run an NFS service to provide shared storage for some of the cloud-native workloads running on the nodes.

Although I took a lot of inspiration from the netbooting workshop, at the moment I’m not booting the Raspberry Pi devices over the network. Following the workshop step by step, I managed to network boot a Raspberry Pi with Raspberry Pi OS, but I haven’t yet figured out how to get it working with Ubuntu as the target OS. In any case, the NUC is ready and has all the software needed to get it working eventually. For now, I wanted to have my home lab up and running, so I went back to something I know: flashing good ol’ SD cards.

Raspberry Pi clusters

So, instead of the official Raspberry Pi OS, I took Ubuntu Server 20.04 (64-bit) as the base operating system for the SBCs. One of the technologies I want to run on my cluster is the Consul Connect service mesh, which is built upon Envoy. Recent Envoy releases support the Arm64 architecture, so that’s one reason to choose 64-bit Ubuntu over 32-bit Raspberry Pi OS.

As mentioned in the Bill of Materials, I’m using the Pimoroni Fan SHIM to keep the Pis cool. A custom fanshim controller, written in Go, is in charge of turning the fan on and off when needed, instead of having it spinning all the time. Besides controlling the fan, it also changes the colour of the LED to indicate the current temperature. And you know, LEDs are just too cool not to use ;-)
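The idea behind such a controller can be sketched in a few lines. The snippet below is not the actual Go controller; it is a minimal Python illustration of on/off control with hysteresis plus a temperature-to-colour mapping. The thresholds (`on_at`, `off_at`, `cool`, `hot`) and function names are hypothetical values chosen for the example.

```python
from typing import Tuple


def next_fan_state(fan_on: bool, temp_c: float,
                   on_at: float = 65.0, off_at: float = 55.0) -> bool:
    """Return the new fan state, using two thresholds to avoid rapid toggling."""
    if temp_c >= on_at:
        return True
    if temp_c <= off_at:
        return False
    return fan_on  # between the thresholds: keep the current state


def led_colour(temp_c: float, cool: float = 40.0, hot: float = 70.0) -> Tuple[int, int, int]:
    """Blend linearly from green (cool) to red (hot) as an (r, g, b) tuple."""
    ratio = min(1.0, max(0.0, (temp_c - cool) / (hot - cool)))
    return (int(255 * ratio), int(255 * (1 - ratio)), 0)


if __name__ == "__main__":
    fan = False
    # Simulated temperature readings in degrees Celsius.
    for reading in [50, 60, 66, 60, 56, 54, 60]:
        fan = next_fan_state(fan, reading)
        print(reading, "fan on" if fan else "fan off", led_colour(reading))
```

Using separate switch-on and switch-off temperatures means the fan does not flap when the CPU hovers around a single threshold.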

Cluster 1: Nomad

On the first cluster, I’ve installed HashiCorp Consul and Nomad using hashi-up:

    ubuntu@sphene-c1-01:~$ nomad node status
    ID        DC         Name          Class   Drain  Eligibility  Status
    9606fa22  sphene-c1  sphene-c1-01  <none>  false  eligible     ready
    025a6ce1  sphene-c1  sphene-c1-02  <none>  false  eligible     ready
    802b7354  sphene-c1  sphene-c1-03  <none>  false  eligible     ready

Cluster 2: k3s

The second cluster has k3s installed using k3sup:

    ubuntu@sphene-c2-01:~$ sudo kubectl get nodes
    NAME           STATUS   ROLES                  AGE     VERSION
    sphene-c2-01   Ready    control-plane,master   2d15h   v1.20.6+k3s1
    sphene-c2-02   Ready    <none>                 2d15h   v1.20.6+k3s1
    sphene-c2-03   Ready    <none>                 2d15h   v1.20.6+k3s1

Topology

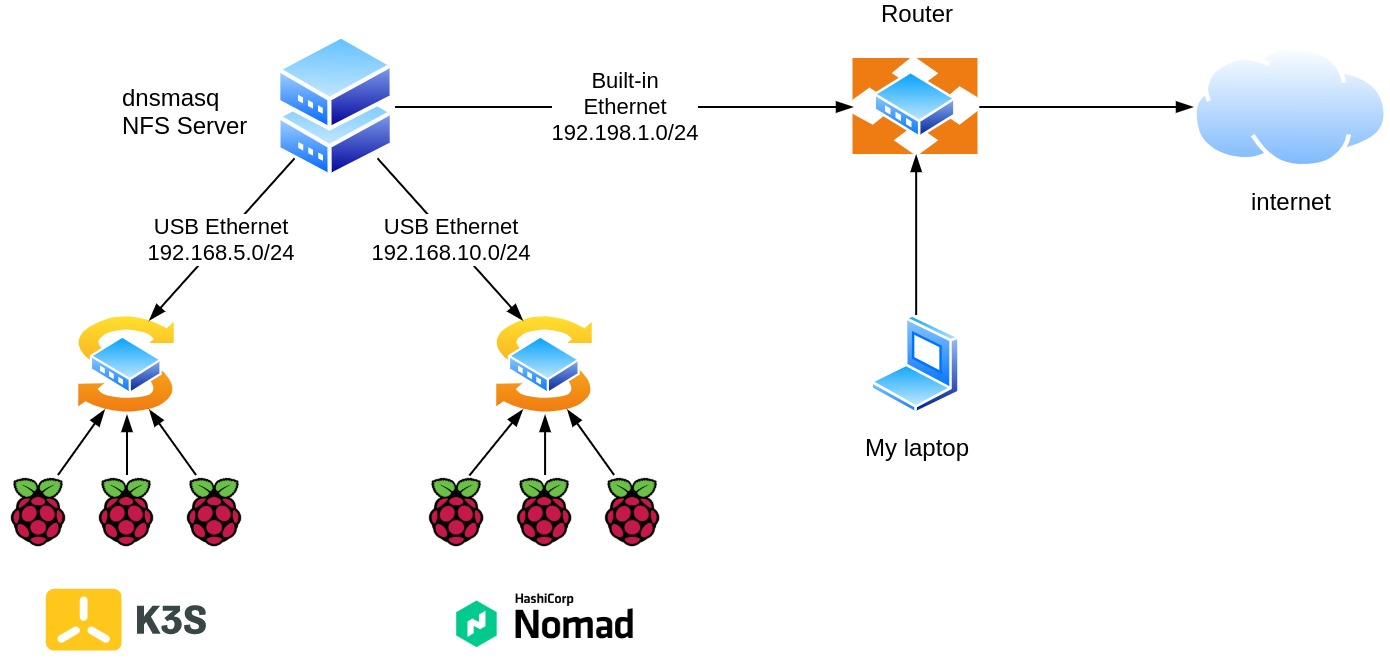

Besides my home network at 192.168.1.0/24, the topology contains two additional subnets, 192.168.5.0/24 and 192.168.10.0/24, which are created with the Kensington UA000E adapters and the Netgear switches.
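To make the address plan concrete, here is a small sketch using Python’s standard `ipaddress` module. It describes the three subnets, checks that they do not overlap, and looks up which network a given address belongs to. The labels `home`, `lab-5` and `lab-10` and the sample address are names I made up for this example; they do not say which cluster sits on which subnet.

```python
import ipaddress
from typing import Optional

# The three networks from the topology; the labels are hypothetical.
NETWORKS = {
    "home": ipaddress.ip_network("192.168.1.0/24"),
    "lab-5": ipaddress.ip_network("192.168.5.0/24"),
    "lab-10": ipaddress.ip_network("192.168.10.0/24"),
}


def network_for(address: str) -> Optional[str]:
    """Return the label of the network containing the address, if any."""
    ip = ipaddress.ip_address(address)
    for name, network in NETWORKS.items():
        if ip in network:
            return name
    return None


def any_overlap() -> bool:
    """Check whether any two of the configured networks overlap."""
    nets = list(NETWORKS.values())
    return any(a.overlaps(b) for i, a in enumerate(nets) for b in nets[i + 1:])


if __name__ == "__main__":
    for name, network in NETWORKS.items():
        # Subtract the network and broadcast addresses of each /24.
        usable = network.num_addresses - 2
        print(f"{name}: {network} ({usable} usable host addresses)")
    print(network_for("192.168.10.42"))
```

Because the subnets are disjoint, the server can decide where a packet goes purely from its destination address.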

The server (the Intel NUC) has three network adapters and performs several roles, including routing traffic between the Raspberry Pis and the Internet via my home router.

diagram displaying the network topology of my home lab

Remote access to my home lab

Both the Intel NUC and all the Raspberry Pis have my SSH key installed as an authorized key. Thus, when working at home, I can use the server as a jump host to reach the Raspberry Pi devices behind it.
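As a rough illustration of the jump-host pattern, the helper below builds an `ssh` command line that uses OpenSSH’s `-J` (ProxyJump) option. The jump host address `me@nuc.example` is a placeholder, not my real hostname, and the `ubuntu` user is taken from the shell prompts shown earlier; treat the whole thing as a sketch rather than my actual tooling.

```python
import shlex
from typing import List


def jump_command(target: str, jump: str, user: str = "ubuntu") -> List[str]:
    """Build an ssh argument list that reaches `target` through `jump`."""
    return ["ssh", "-J", jump, f"{user}@{target}"]


if __name__ == "__main__":
    # "me@nuc.example" is a hypothetical address for the jump host.
    argv = jump_command("sphene-c1-01", "me@nuc.example")
    print(shlex.join(argv))
    # To actually connect, pass the list to subprocess.run(argv).
```

The same effect can be achieved with a `ProxyJump` entry in the SSH client configuration, which avoids typing the jump host each time.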

To access my home lab from anywhere, the server is given a public IP address by pairing an inlets PRO client with an exit server running on a Google Compute instance. The SSH server is not exposed directly; because the exit server runs on Google Cloud, I can add a security layer with the Cloud Identity-Aware Proxy. For more details on this kind of set-up, have a look at a previous blog post I’ve written: Control Access to your on-prem services with Cloud IAP and inlets PRO

inlets PRO exit-node protected by Google IAP

Wrapping up

After reading this post, you should have a basic idea of my current home lab installation with two Raspberry Pi clusters. I’m quite happy with this set-up, although it still bothers me a little that I haven’t yet figured out how to boot Ubuntu, instead of Raspberry Pi OS, over the network. But I’m sure I’ll figure it out in the near future.

To wrap up, enjoy this last picture …

all the flashing lights at night

See also:

- Building a Nomad cluster on Raspberry Pi running Ubuntu server

- Consul Service Mesh across a private Raspberry Pi and a public Cloud

- Control Access to your on-prem services with Cloud IAP and inlets PRO

References: